Agent vs Harnessed Agent

Claude Code is great for interactive exploration. Once you need long-running, recoverable, auditable agent execution, code-level control becomes much harder to avoid.

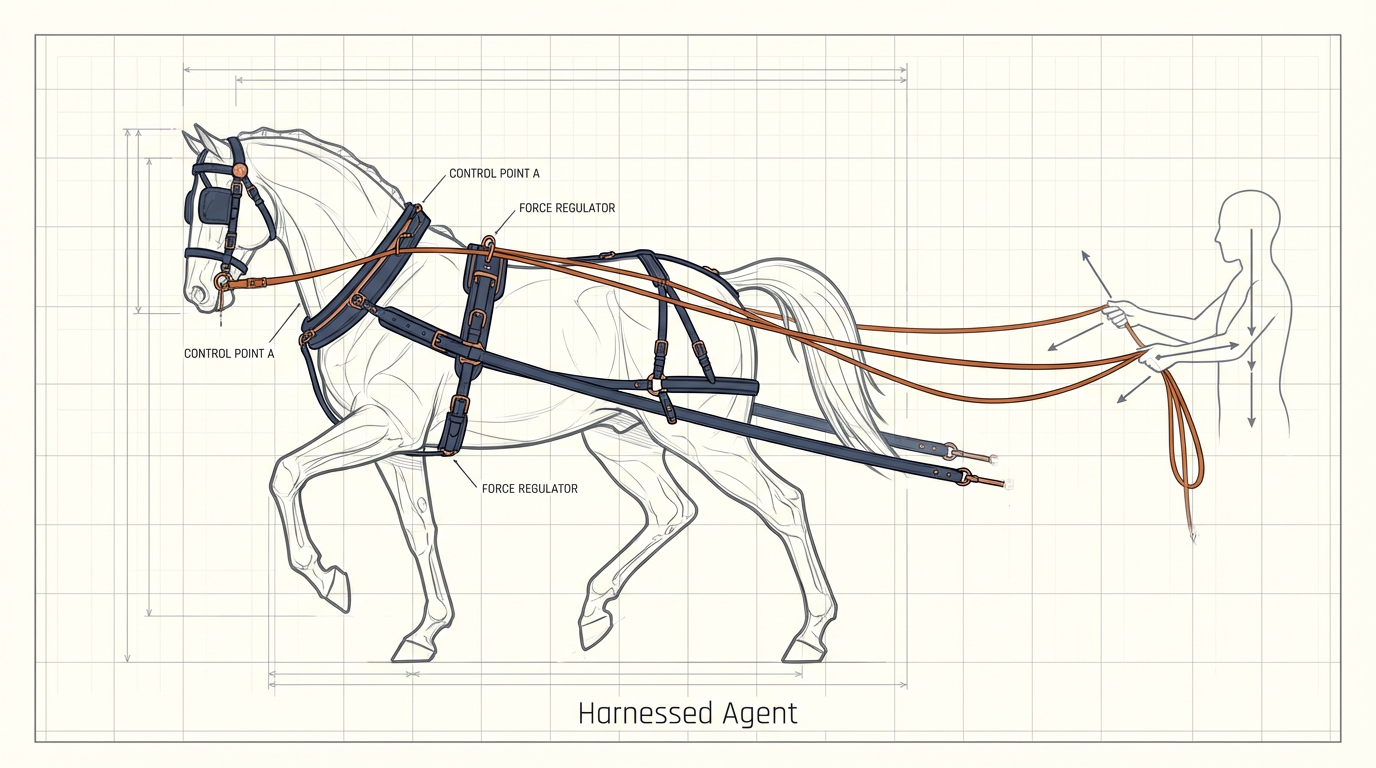

Once you start thinking about agents in production, the same question keeps coming back: what actually keeps the agent under control?

It helps to define the word first. A harness is literally the gear used to control and direct a horse. In the agent world, the meaning is similar: you are not weakening the agent, you are putting a strong agent inside a controlled execution structure.

A Harnessed Agent is just an agent whose autonomy is wrapped in code-level boundaries. It can keep moving on its own, but it does so inside rules, state mechanisms, and recovery paths that someone actually built.

I Initially Thought This Could Stay Lightweight

At first I thought harnesses could be built very lightly.

My assumption was that Claude Code, a filesystem, and a good CLAUDE.md file might already be enough to support multi-agent coordination, workflow management, and state persistence.

After trying it for real, I changed my mind.

You can absolutely sketch a lightweight harness with prompts and files. But once the system needs to run for a long time, recover from failure, stay auditable, and remain stable over time, code becomes the real control layer.

Now I think of the two approaches as tools for different stages:

- Claude Code: heavy human intervention, strong back-and-forth, good for PoCs and manual debugging

- Agent Harness: weaker human intervention, clearer end-to-end execution, better fit for production pipelines or CI/CD integration

The Differences Show Up Once You Actually Run It

Runtime length

The longest Claude Code task I ran lasted around 40 minutes.

A code-driven harness, by contrast, ran for 8 hours in one of my tests. That does not prove the output is better, but it does show that long-running execution changes the problem. An interactive session agent and a harnessed system stop being the same thing.

Resume after failure

If Claude Code gets interrupted, a human usually has to step in and get the task moving again.

A harness can treat interruption as part of the runtime model. In one of my tests, MiniMax hit the usage limit on its token plan within a five-hour window. The workflow did not collapse. It waited for the limit to reset, then resumed from the breakpoint automatically.
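To make the resume behavior concrete, here is a minimal sketch of a wait-then-resume loop. It assumes the harness breaks work into ordered steps and that the model client raises an error carrying a retry delay when the quota runs out. `UsageLimitError`, `run_with_resume`, and the JSON checkpoint format are hypothetical names for illustration, not MiniMax's API or the autonomous-coding demo's code.

```python
import json
import time
from pathlib import Path
from typing import Callable, Sequence


class UsageLimitError(Exception):
    """Hypothetical error a model client raises when its usage quota is exhausted."""

    def __init__(self, retry_after_s: float):
        super().__init__(f"usage limit reached; retry after {retry_after_s}s")
        self.retry_after_s = retry_after_s


def load_checkpoint(path: Path) -> int:
    """Return the index of the next step to run, or 0 if no checkpoint exists yet."""
    if path.exists():
        return json.loads(path.read_text())["next_step"]
    return 0


def save_checkpoint(path: Path, next_step: int) -> None:
    path.write_text(json.dumps({"next_step": next_step}))


def run_with_resume(
    steps: Sequence[Callable[[], None]],
    checkpoint: Path,
    sleep: Callable[[float], None] = time.sleep,  # injectable so tests need not really wait
    max_waits: int = 10,
) -> None:
    """Run steps in order, waiting out usage limits and resuming from the checkpoint."""
    step = load_checkpoint(checkpoint)  # after a crash, skip steps that already finished
    waits = 0
    while step < len(steps):
        try:
            steps[step]()
        except UsageLimitError as err:
            waits += 1
            if waits > max_waits:
                raise  # give up and surface the error to a human
            sleep(err.retry_after_s)  # wait for the quota window to reset
            continue  # retry the same step; completed steps are not re-run
        step += 1
        save_checkpoint(checkpoint, step)  # persist progress after every completed step
```

Because progress is saved after every completed step, restarting the process after a crash also picks up at the last checkpoint instead of starting over.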

State management

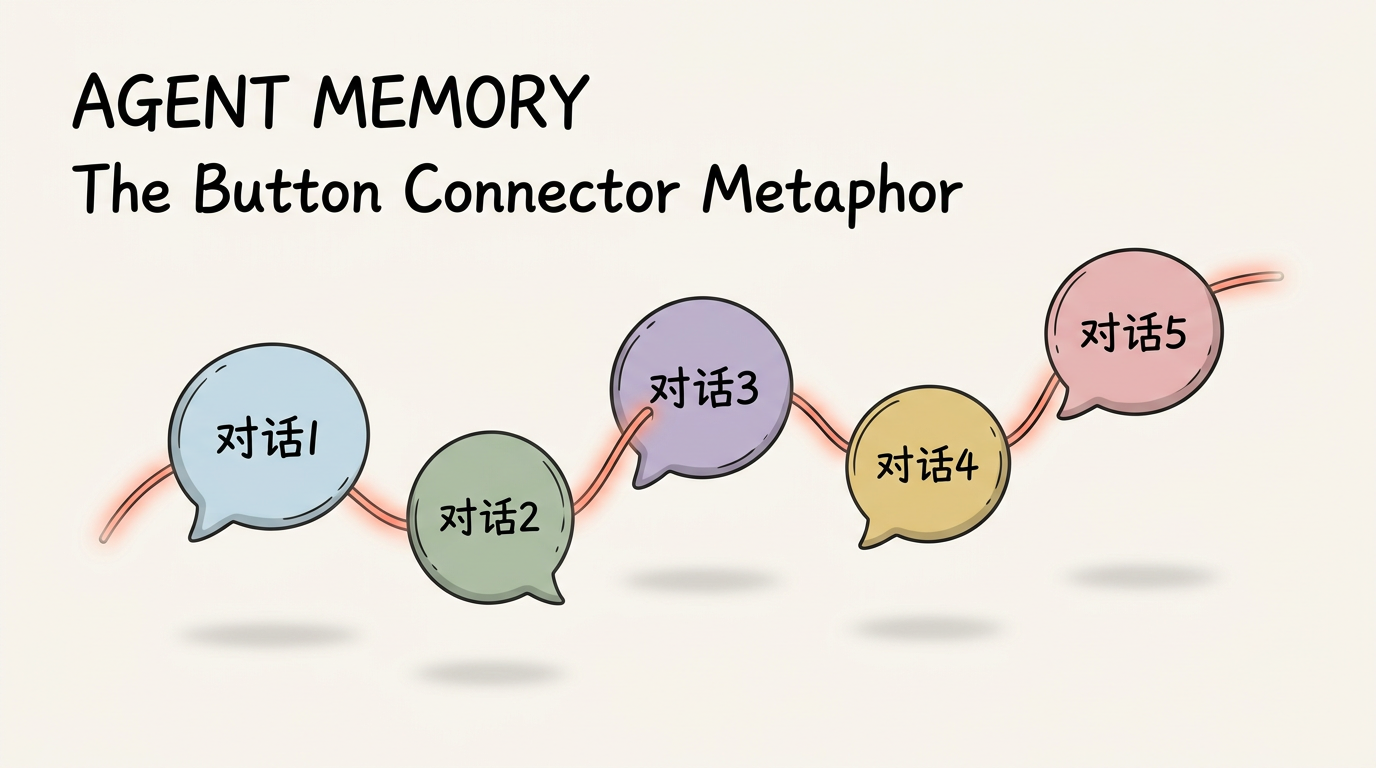

Claude Code can use the filesystem for state too, but once a session runs for a long time, the context grows, compaction happens more often, and important information becomes easier to lose.

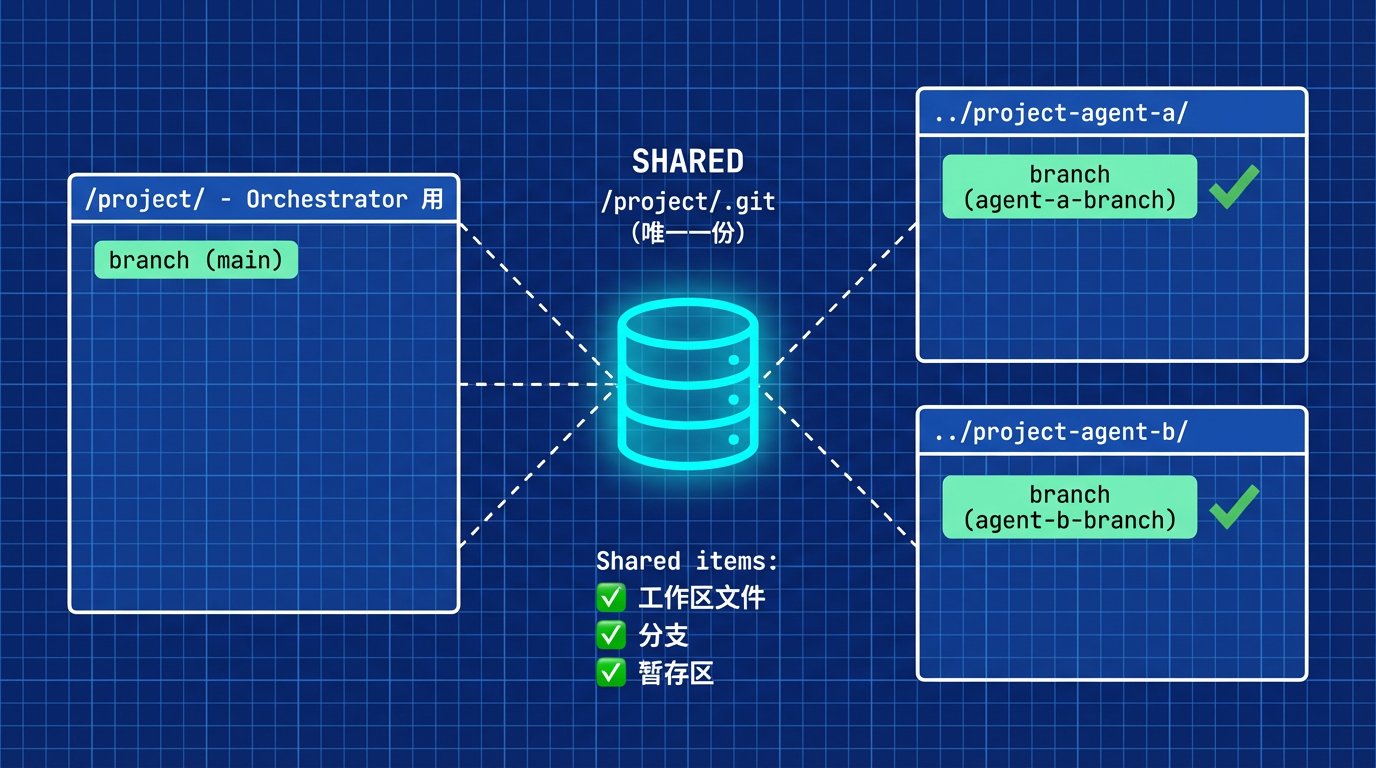

A coded harness can be much stricter here. Each session gets a fresh context window, while state moves through files and snapshots instead of accumulating inside one giant conversation.
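One way to keep file-based state strict is to write each snapshot atomically, so an interrupted session never leaves a half-written state file for the next session to read. A minimal sketch, assuming the state is a JSON document; `write_snapshot` and `read_snapshot` are hypothetical helpers, not part of any particular harness.

```python
import json
import os
import tempfile
from pathlib import Path


def write_snapshot(path: Path, state: dict) -> None:
    """Replace the state file atomically so readers see either the old or the new snapshot."""
    # Create the temp file next to the target so os.replace stays on one filesystem.
    fd, tmp = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(state, f, indent=2)
            f.flush()
            os.fsync(f.fileno())  # make sure the bytes hit disk before the rename
        os.replace(tmp, path)
    except BaseException:
        os.unlink(tmp)  # never leave stray temp files behind
        raise


def read_snapshot(path: Path) -> dict:
    """Load the latest snapshot; a new session starts from this, not from chat history."""
    return json.loads(path.read_text())
```

`os.replace` is atomic when the temporary file and the target live on the same filesystem, which is why the temporary file is created in the target's own directory.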

Auditability

Interactive agents such as Claude Code tend to finish a large chunk of work and commit later.

A harness can enforce a narrower workflow: split the task, do one feature at a time, commit immediately, then move to the next unit. That naturally produces a Git history that is easier to inspect.
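Enforcing the one-feature, one-commit rule can be as simple as having the harness, not the agent, call Git after each feature passes. A minimal sketch using the standard `git` CLI through `subprocess`; `commit_feature` and the `feat(<id>): <description>` message format are illustrative assumptions, not the demo's conventions.

```python
import subprocess
from pathlib import Path


def git(repo: Path, *args: str) -> str:
    """Run a git command inside repo and return stdout; raise if git exits non-zero."""
    result = subprocess.run(
        ["git", *args], cwd=repo, check=True, capture_output=True, text=True
    )
    return result.stdout


def commit_feature(repo: Path, feature_id: str, description: str) -> bool:
    """Stage everything and record exactly one commit for one finished feature.

    Returns False when nothing changed, so the harness never creates empty commits.
    """
    git(repo, "add", "-A")
    # `git diff --cached --quiet` exits 1 when changes are staged and 0 when none are.
    staged = subprocess.run(["git", "diff", "--cached", "--quiet"], cwd=repo).returncode == 1
    if not staged:
        return False
    git(repo, "commit", "-m", f"feat({feature_id}): {description}")
    return True
```

Since the harness calls this right after a feature's tests pass, each commit maps to one unit of work, which is what makes the resulting history easy to review.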

Looking Closely at autonomous-coding

Anthropic's autonomous-coding demo made this feel much more concrete to me.

What I care about here is that code, not the prompt, is doing the constraint work.

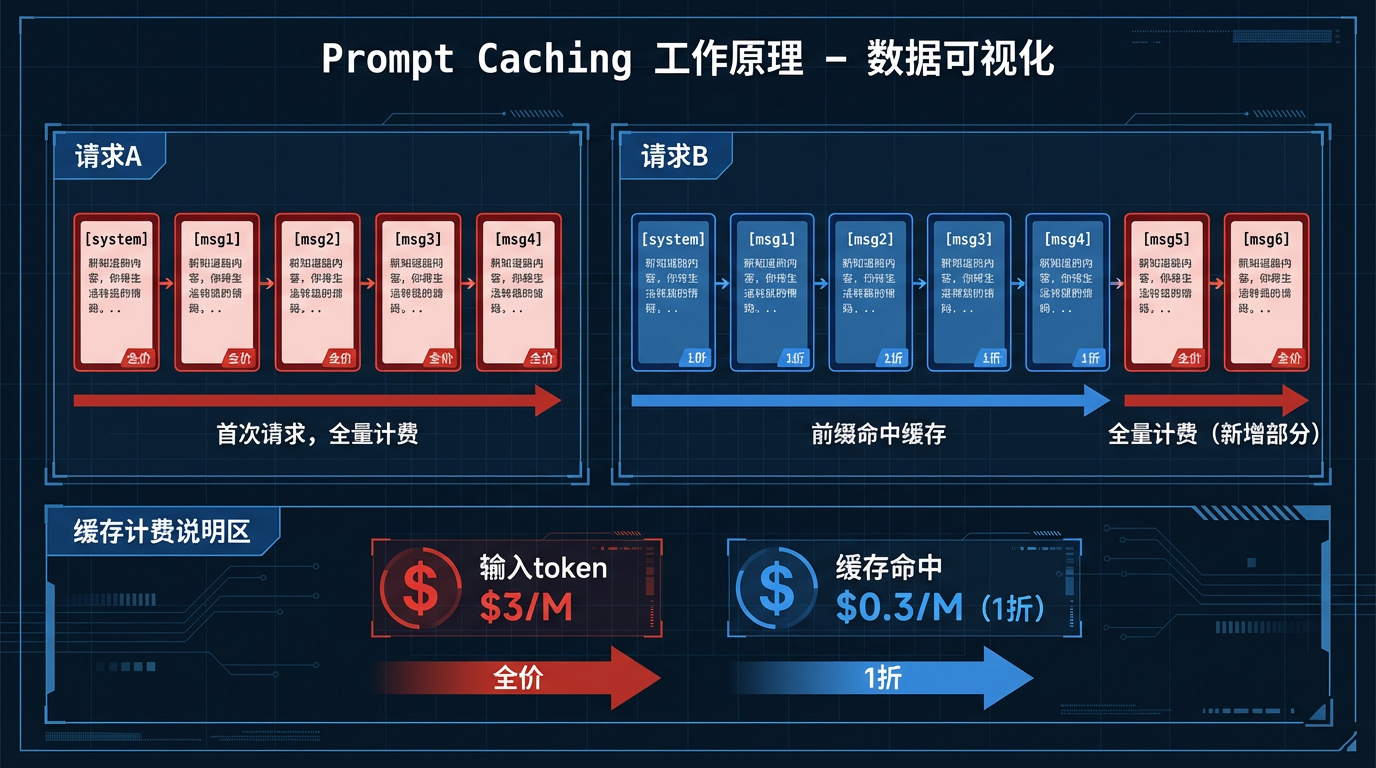

Prompt-only control is expensive to maintain. Even when it works once, it is hard to make it behave the same way over time. Code gives you somewhere to lock those constraints into the execution path.

Its flow is straightforward:

- An init agent turns the request into a feature list, prepares tests, initializes the repo, writes setup scripts, and creates progress tracking

- A new session starts, and a coding agent enters a coding -> test -> debug -> update progress loop while working on exactly one feature at a time

After each feature is done, the code advances the process into the next session until the backlog is cleared.
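The control flow above can be sketched as a small driver loop. This is not the demo's actual code: the agent hooks are stubs standing in for model calls, and the assumption that each entry in feature_list.json carries a boolean `passes` field is mine, chosen to keep the example self-contained. `run_harness` and `next_feature` are hypothetical names.

```python
import json
from pathlib import Path
from typing import Callable, Optional

# Hypothetical agent hooks. In a real harness each would start a model session.
InitAgent = Callable[[str, Path], None]     # writes feature_list.json into workdir
CodingAgent = Callable[[dict, Path], bool]  # implements one feature; True if its tests pass


def next_feature(features: list) -> Optional[dict]:
    """Select exactly one unfinished feature: the first whose `passes` flag is False."""
    return next((f for f in features if not f["passes"]), None)


def run_harness(
    request: str,
    workdir: Path,
    init_agent: InitAgent,
    coding_agent: CodingAgent,
    max_sessions: int = 100,
) -> int:
    """Run the init agent once, then fresh coding sessions, one feature each, until done."""
    feature_file = workdir / "feature_list.json"
    if not feature_file.exists():  # on a rerun, skip init and continue from the file
        init_agent(request, workdir)
    sessions = 0
    while sessions < max_sessions:
        features = json.loads(feature_file.read_text())  # state comes from disk every time
        feature = next_feature(features)
        if feature is None:
            break  # backlog cleared
        sessions += 1
        # Fresh session: the agent receives one feature and the working directory, no history.
        if coding_agent(feature, workdir):
            feature["passes"] = True
            feature_file.write_text(json.dumps(features, indent=2))
    return sessions
```

Because the loop re-reads feature_list.json at the top of every session, the file on disk, not any conversation history, decides what happens next. A failed feature simply stays unfinished and is picked up again by the next session, bounded by `max_sessions`.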

The Two Constraints I Care About Most

- the coding agent handles one feature at a time

- every session starts with a fresh context window

Those two choices support each other.

Models naturally pay more attention to what appears near the beginning and the end of context. If you keep everything inside one long session, instructions drift and the signal gets diluted. If each coding run starts fresh and only loads the current task plus a file-based state snapshot, the task stays much sharper.

This design targets one problem in particular: context explosion inside a single conversation.

Suppose Session 1 produces 50 features and Session 2 begins:

Traditional approach:

Context = [all Session 1 history] + [new Session 2 content]

= 50 features' worth of implementation detail + the next task

= context overload, important signals get buried

autonomous-coding approach:

Context = [current session prompt] + [read feature_list.json]

= current task + full state snapshot

= cleaner context, higher signal density
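The two context strategies can be compared with a toy model in which each message is a plain string. The function names are hypothetical, the 2,000-character implementation log per feature is an arbitrary stand-in, and sizes are measured in characters rather than tokens, so treat this as an illustration of the growth pattern, not a measurement.

```python
import json


def traditional_context(history: list, new_task: str) -> list:
    """One long conversation: every earlier message is carried into the next turn."""
    return history + [new_task]


def harness_context(session_prompt: str, feature_list: list, task: str) -> list:
    """Fresh session: a fixed prompt, a compact state snapshot, and the one current task."""
    snapshot = json.dumps([{"id": f["id"], "passes": f["passes"]} for f in feature_list])
    return [session_prompt, snapshot, task]


if __name__ == "__main__":
    # Hypothetical Session 1 output: 50 features, each leaving ~2,000 characters of detail.
    history = [f"implementation log for feature {i}: " + "x" * 2000 for i in range(50)]
    features = [{"id": i, "passes": True} for i in range(50)] + [{"id": 50, "passes": False}]
    task = "implement feature 50"
    trad = traditional_context(history, task)
    fresh = harness_context("You are the coding agent.", features, task)
    print("traditional:", len(trad), "messages,", sum(map(len, trad)), "chars")
    print("harness:", len(fresh), "messages,", sum(map(len, fresh)), "chars")
```

The traditional context grows with every feature's full implementation detail, while the harness context grows by only one short status entry per feature, which is the difference in signal density described above.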

After Running the Demo, My View Got More Specific

I already do a lighter version of this by hand when I use Claude Code. If I am implementing two unrelated features, I usually open separate sessions so the contexts do not bleed into each other. autonomous-coding turns that habit into system design.

I tested the demo with MiniMax on a pixel-art dungeon roguelike. The run lasted about eight hours. The generated game was not an empty shell either: it had combat, an economy loop, and even a key-and-door mechanic.

It was not a production-grade result, though. Both the UI/UX and the gameplay lacked depth. Part of that comes from the quality of the upstream product design, so there is still plenty of room to push the system further.

Code-constrained agents can run long tasks. That much seems real. But runtime length does not tell you much about code quality or product complexity by itself.

From there, the work shifts to a different set of questions:

- How do you raise the quality of the multi-agent harness itself?

- How should it collaborate with products like Claude Code instead of replacing them?

- How do you plug it into an existing CI/CD pipeline?