How I Think About Agent Memory

I prefer agent memory on demand, not by default. The real difficulty is deciding what to keep, when to keep it, and how to stop memory from turning into noise.

I have been thinking about agent memory a lot lately, and my view is fairly simple: use memory when it saves real repeated work. Do not treat it as a default feature every agent must have.

Memory is an old topic, but tools like openclaw have pushed it back into the spotlight. A lot of people instinctively assume that an intelligent agent should have memory. I think that is the first misconception.

If an agent without memory already satisfies your everyday needs, that is perfectly fine. Memory is not an automatic requirement.

What Memory Changes

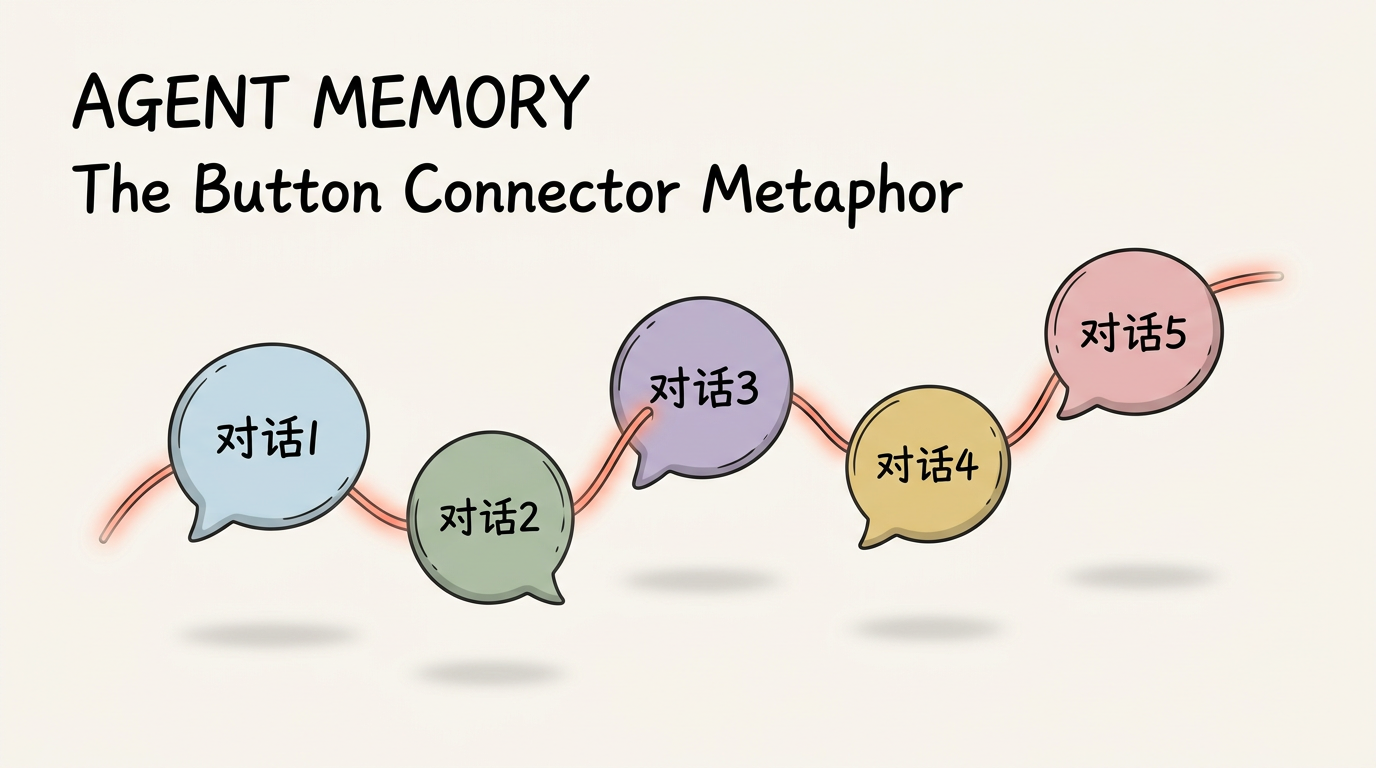

It connects separate conversations

Most of our interactions with an agent are just question-and-answer exchanges.

Each new conversation creates a new isolated "button." What MEMORY.md does is thread those buttons together. Once that thread exists, a new conversation can pull in key information that was created somewhere else.

It removes repeated setup

Why bother doing that at all?

Because without memory, every conversation starts from zero. That means there is always an initialization cost, and for the user that usually means retyping prompts they have already written many times before.

There is nothing magical about memory. It just removes that repetition.

So you only really need memory when you have a genuine need to connect information across sessions. Otherwise, a chatbot is often enough.

Where Automatic Memory Starts To Fail

I have been using openclaw recently. Its underlying architecture is based on Claude Code, so you can also find a MEMORY.md file inside Claude Code projects.

The appeal of openclaw is clear: it tries to automate memory. I do not think the experience is there yet, mainly for two reasons:

- it is not stable enough

- it writes too much, and too indiscriminately

Stability

Stability here is relative, not absolute. It depends on how explicitly you state what should be remembered.

If you explicitly tell an agent to remember something by writing instructions like "remember:" in the prompt, then most state-of-the-art agents will do a decent job.

But if you do not make that intent explicit, whether the system remembers anything useful is much less predictable.
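To make the difference between explicit and implicit intent concrete, here is a minimal sketch of the explicit side. It assumes the "remember:" marker sits at the start of a line; the function name `extract_explicit_memories` is hypothetical, and this is not how openclaw or any particular agent actually detects intent.

```python
import re

# Assumed convention: a line that starts with "remember:" (any casing)
# marks something the user explicitly wants stored.
_REMEMBER = re.compile(
    r"^[ \t]*remember:[ \t]*(.+?)[ \t]*$",
    re.IGNORECASE | re.MULTILINE,
)


def extract_explicit_memories(prompt: str) -> list[str]:
    """Return the text after each 'remember:' marker, in order of appearance."""
    return [match.group(1) for match in _REMEMBER.finditer(prompt)]
```

A prompt such as "Fix the build.", followed by a line reading "Remember: use pnpm, not npm", yields one item. A prompt with no marker yields an empty list, which is exactly the case where the agent has to guess.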

Too much noise

openclaw first writes daily activity into memory and then organizes it into MEMORY.md.

That sounds convenient, but if you have not clearly defined what should be remembered and what should not, MEMORY.md eventually becomes messy and bloated. Then you start paying the real costs:

- more context usage

- more context pollution

- more important details getting buried under noise

The Questions I Actually Care About

To me, the real discussion should come back to two questions:

- Do I actually need automatic memory?

- What exactly is worth remembering?

For my own workflow, automatic memory is not essential.

If someone solves the stability and noise problems cleanly, I would use it. Everyone wants their own Jarvis.

I already have a fairly clear sense of what is worth storing and when to capture it, so I do not mind triggering precise memory by hand.

For me, a sense of control over the agent matters more than automation by itself.

So my workflow looks like this:

- Build a session-reflect skill to deeply review sessions that are worth preserving

- Extract concrete outputs such as problems found, lessons learned, and how to improve

- Write only that distilled value into MEMORY.md

Manually triggering that process when needed is a small cost. The result is cleaner memory with less junk and less drift.
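The last two steps of that workflow can be sketched in a few lines. This is only an illustration under stated assumptions: the `Reflection` structure, `render_entry`, and `append_to_memory` are hypothetical names, the Markdown layout is one possible choice, and the review itself (the part the session-reflect skill does) is not modeled here.

```python
from dataclasses import dataclass, field
from datetime import date
from pathlib import Path


@dataclass
class Reflection:
    """Distilled output of one reviewed session (hypothetical structure)."""
    title: str
    problems: list[str] = field(default_factory=list)
    lessons: list[str] = field(default_factory=list)
    improvements: list[str] = field(default_factory=list)


def render_entry(r: Reflection, day: date) -> str:
    """Format a reflection as a short Markdown section; empty groups are skipped."""
    lines = [f"## {day.isoformat()} {r.title}"]
    groups = [
        ("Problems found", r.problems),
        ("Lessons learned", r.lessons),
        ("How to improve", r.improvements),
    ]
    for label, items in groups:
        if items:
            lines.append(f"### {label}")
            lines.extend(f"- {item}" for item in items)
    return "\n".join(lines) + "\n"


def append_to_memory(memory_file: Path, r: Reflection, day: date) -> bool:
    """Append one entry to the memory file. Returns False if nothing was distilled."""
    if not (r.problems or r.lessons or r.improvements):
        return False  # an empty reflection is noise, so it is not stored
    # Separate entries with a blank line when the file already has content.
    has_content = memory_file.exists() and memory_file.stat().st_size > 0
    prefix = "\n" if has_content else ""
    with memory_file.open("a", encoding="utf-8") as f:
        f.write(prefix + render_entry(r, day))
    return True
```

The one design choice worth noting is the early return: a session that produced no problems, lessons, or improvements writes nothing, which keeps the file limited to distilled value.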

How memory should be handled depends on the product.

If you are building a companion-style agent, automatic memory is part of the job. If you are building a tool that helps someone work, the tradeoff can be very different. The gap between products is rarely "memory" versus "no memory." It is the quality of the memory policy underneath.